Why Spotify has no button to filter out AI music

Getty Images

In mid-2025, frustration boiled over for Cedrik Sixtus.

Finding his Spotify playlists increasingly sprinkled with tracks he suspected were AI-generated, the Leipzig-based software developer built a tool to automatically label and block them from his listening.

He uploaded his Spotify AI Blocker to a couple of code-sharing websites, where hundreds have downloaded it.

It filters out a growing list of more than 4,700 suspected AI artists, drawing on existing community tracking efforts and on signs such as unusually high release volumes and AI-style cover art, supplemented with external detection tools.

"It is about choice – if you want to hear AI music or if you don't," says Sixtus, who would prefer that Spotify itself labelled AI-generated content and let users filter it.

Sixtus's tool is installed initially via the web browser version of Spotify. He warns that using his software "may violate Spotify's terms of service".

He isn't alone: feelings run deep on the community forum of the world's most popular music streaming service.

While for Sixtus the issue is that AI music doesn't sound right, others simply don't want to listen to music made by a bot.

Spotify has made some concessions to address such concerns.

In April it launched a test feature which shows, in a song's credits, how an artist used AI. But it's a voluntary system based on what an artist tells their record label or distributor.

"We know this isn't a complete solution on its own. Building a truly comprehensive system is a challenge that requires industry-wide alignment," Spotify said in April.

Spotify's position is certainly a long way from actively identifying AI-generated music and giving users an option to filter it out.

"It is a difficult – borderline existential – balancing act for Spotify," says Robert Prey, who studies streaming platforms at Oxford University's Internet Institute.

Spotify is trying to avoid value judgments about how music is created, but risks eroding trust among listeners, artists and the wider industry if it fails to offer enough transparency, he explains.

"It has to figure out what listeners want and how artists feel – all while AI is improving, being used more widely and becoming harder to detect," he adds.

The arrival of AI tools for music is both seducing and unsettling the music world.

Generative AI music services like Suno and Udio now produce increasingly polished, fully realised songs, complete with lyrics, vocals and instrumentation from simple text prompts in seconds.

In one recent controlled test, part of a Deezer–Ipsos poll, 97% of listeners failed to correctly distinguish between AI-generated and human-made tracks.

And tens of thousands of AI tracks appear to be uploaded to streaming platforms daily, where they could dilute revenue pools for human artists – even if most currently attract few listens.

Spotify, along with YouTube Music and Amazon Music, has so far avoided any clear user-facing labels or filters for AI-generated music, neither openly using detection tools nor requiring systematic self-disclosure – though that may change as industry standards develop.

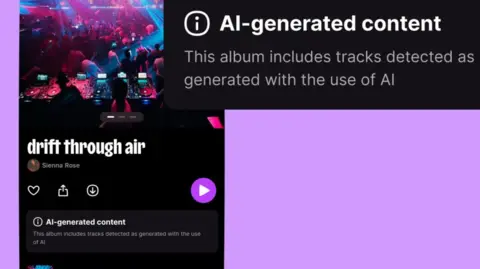

Widely suspected AI acts like Sienna Rose, Breaking Rust and The Velvet Sundown are essentially treated like any other artists by Spotify, even as the platform removes what it considers AI-related spam such as mass uploads and short tracks designed to game the system.

"Our priority is addressing harmful uses [of AI] like spam and impersonation, rather than trying to filter music based on how it was made," a Spotify spokesperson said, adding that AI in music is not a binary category but exists on a spectrum.

Deezer – a smaller competitor to Spotify – has taken a stronger approach.

Last year it began both tagging albums that contain AI-generated tracks produced by Suno, Udio and similar tools, and excluding those tracks from algorithmic recommendations and human-made playlists.

It uses its own in-house detection technology based on training AI models to spot statistical patterns in the sound itself, and recently began offering it for sale across the industry.

"We're the only music streaming platform that has that in place," notes Jesper Wendel, its head of global communications.

In March, Apple Music said it was introducing "transparency tags" and would eventually require that music labels and distributors self-disclose when new songs or related content involve AI.

But, as with Spotify's song credit feature, critics point out that such disclosures are unlikely to be reliable, as artists may prefer not to disclose AI use for fear of stigma – and how visible Apple's tags will be to listeners remains unclear.

That AI music exists on a continuum does make labelling difficult, says Maya Ackerman, an expert in AI and computational creativity at Santa Clara University in California and co-founder and CEO of WaveAI, which has an AI tool to help musicians write song lyrics.

While some tools are "prompt in, song out" – where AI labels would be straightforward – others are designed for co-creation, assisting with specific parts of the music-making process. If a musician uses those tools, at what point does that warrant a label?

And, Ackerman adds, even with tools like Suno and Udio, users can put a lot of their creative selves into the outputs – feeding in their own lyrics or spending many hours iterating on the song's sound.

"From a distance it looks like such an obvious 'yes, label AI music' but, once you zoom in, you realise it is a very complicated thing," she says.

There is also the technical challenge of accurately detecting AI-generated tracks, with potentially serious consequences if human musicians are falsely labelled as AI.

Even detecting fully AI-generated music can be fraught, notes Bob Sturm, who studies AI's disruption of music at the KTH Royal Institute of Technology in Sweden.

AI detection systems are trained on outputs from existing AI music generation tools, but as those tools improve, the detection software must be continually retrained, leading to what he characterises as a kind of "AI music arms race".

It is a challenge, acknowledges Manuel Moussallam, Deezer's head of research, but the company's detection technology has so far maintained a low false positive rate, he says, and research to better understand hybrid cases, where AI is only partially used, is ongoing.

Yet others see such concerns as a distraction.

"There is a lobbying message to say 'we can't draw the line, and therefore we shouldn't do anything'," says David Hoffman, a professor at Duke University in North Carolina who studies the impact of AI-generated music on artists' livelihoods.

He argues platforms should at least label fully AI-generated tracks and assess the scale of the remaining issue from there.

And listeners appear to want labels: in the Deezer–Ipsos poll, around 80% of respondents said AI-generated music should be clearly labelled, though views on filtering were more divided.

"Listeners deserve awareness," says singer-songwriter Tift Merritt, who works with Hoffman as a practitioner-in-residence at Duke, citing the way we provide nutritional labels on food or tell consumers if it is organic.

What may really be stopping Spotify from embracing labelling and filtering is economics, many speculate.

Spotify is trying to optimise for platform growth, says Prey from Oxford. Keeping recommendation systems as "unencumbered and free to operate as possible" helps with that.

Detecting AI-generated content would add cost, Hoffman notes, and it may also be cheaper to serve up AI music.

Past controversies fuel suspicion, critics note. Spotify has, at various points, been accused of commissioning and promoting lower-cost music for background-style playlists – claims it denies.

"All tracks on our platform are delivered by third-party rightsholders like labels and distributors, and the payment model is the same for all of them: royalties are paid out of the revenue pool based on listening share," a Spotify spokesperson said.

Meanwhile the area is evolving.

The music industry's standards body, DDEX, is continuing to work on a broad industry standard for AI disclosures in music credits, though display will depend on the streaming platforms.

And certain AI-generated content is required to be labelled from August 2026 under the EU AI Act, though how Spotify will implement those rules remains unclear.

It feels like the "Wild West" for AI music right now, says David Hesmondhalgh, professor of media, music and culture at the University of Leeds.

But he also expects "some kind of order will emerge", just as the early-2000s file-sharing panic ultimately led to today's streaming industry.

And Spotify appears to be recognising the pressure, recently announcing features aimed at elevating human artistry, including SongDNA and "About the Song", which give premium users deeper insight into a track's origins and contributors.

"We believe the right response to AI in music isn't any single policy, it's a combination of proactive controls, industry-wide standards, and a deeper investment in the human creativity behind every track," added the Spotify spokesperson.
