AI sped up James Webb Space Telescope data analysis from years to days. What can it do for the groundbreaking Rubin Observatory?

AI image processing has sped up analysis of data from NASA's James Webb Space Telescope from years to mere days or less, ushering in an avalanche of ground-breaking discoveries that may otherwise never have been made.

And now, the technology will be used to enhance the quality of images taken by the Chile-based Vera C. Rubin Observatory, the newest astronomy powerhouse, to make them appear as sharp as if they had been taken from space.

The Vera C. Rubin Observatory, named after the American astronomer who discovered one of the key pieces of evidence for the existence of dark matter, sits atop the 8,770-foot (2,673 meters) Cerro Pachón in the Chilean Andes. The telescope began operations last year. It scans the entire sky every three nights, aiming to create a 10-year timelapse of the motions of objects in the sky.

Its position in Chile's Atacama Desert, the most parched region on the planet, allows the observatory to benefit from a dry atmosphere and a year-round clear sky. Still, Rubin's observations suffer from significant distortions, as light from distant celestial objects must pass through Earth's atmosphere before it hits the telescope's detectors.

A new AI algorithm developed by researchers from the University of California, Santa Cruz (UCSC) will now attempt to remove this distortion and increase the resolution of the images to make them look as if they had been taken from space.

"Ground-based telescopes suffer from blurring owing to atmospheric turbulence as the light comes through," Brant Robertson, a professor of astronomy and astrophysics at UCSC, whose team developed the new AI model, told Space.com. "We spend a lot of money on high-performance technology to remove that atmospheric distortion, but we can also train AI machine learning models to take out some of that blurring."
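The blurring Robertson describes is commonly modeled as a convolution of the true sky with a point spread function (PSF). The sketch below is only an illustration of that idea, not part of the team's pipeline: it assumes a simple Gaussian PSF, and the names `gaussian_kernel` and `convolve` and the 9x9 toy image are invented for the example. It shows a single star's light being spread over neighboring pixels while its total flux is preserved.

```python
import math

def gaussian_kernel(size, sigma):
    """Return a normalized size x size Gaussian kernel (assumed stand-in for the PSF)."""
    half = size // 2
    kernel = [[math.exp(-((x - half) ** 2 + (y - half) ** 2) / (2 * sigma ** 2))
               for x in range(size)] for y in range(size)]
    total = sum(sum(row) for row in kernel)
    return [[value / total for value in row] for row in kernel]

def convolve(image, kernel):
    """Apply the kernel to the image, treating pixels outside the frame as zero.

    The kernel is symmetric here, so correlation and convolution coincide.
    """
    height, width = len(image), len(image[0])
    half = len(kernel) // 2
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            acc = 0.0
            for ky, kernel_row in enumerate(kernel):
                for kx, weight in enumerate(kernel_row):
                    sy, sx = y + ky - half, x + kx - half
                    if 0 <= sy < height and 0 <= sx < width:
                        acc += image[sy][sx] * weight
            out[y][x] = acc
    return out

# A toy "space-quality" image: one star in the middle of an empty 9x9 frame.
sharp = [[0.0] * 9 for _ in range(9)]
sharp[4][4] = 1.0

# The same star as a ground-based telescope might record it (hypothetical PSF width).
blurred = convolve(sharp, gaussian_kernel(5, 1.0))

peak = blurred[4][4]                              # central pixel is now much fainter
total_flux = sum(sum(row) for row in blurred)     # but the total light is unchanged
```

A model that sharpens ground-based images is, in effect, trying to undo this spreading, which is why it needs examples of what the unblurred sky looks like.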

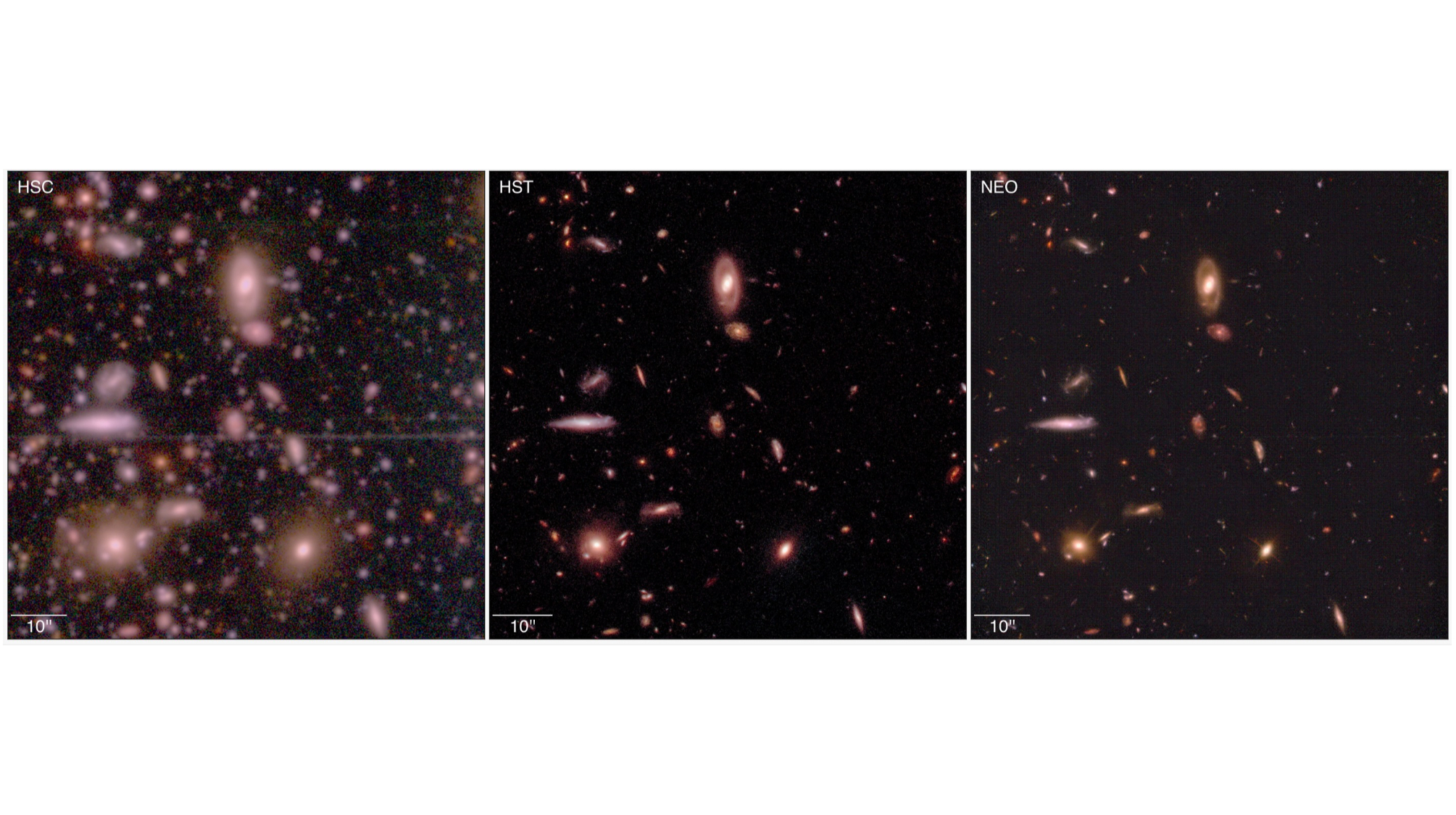

The researchers trained the generative model, called Neo, using images taken by the Subaru Telescope in Japan and images of the same sections of the sky captured by the Hubble Space Telescope. The task for the model was to learn how to fill in the details missing from the images taken from Earth. The results were impressive. The researchers said in a paper that the Neo model "improves the accuracy of measured morphological parameters by factors of 2-10."

In practice, that means an increased resolution that reveals a vast quantity of individual stars and precise shapes of galaxies where before one would find only vague smudges.

"The model improves the spatial quality of that data and recovers, in a statistical sense, the properties of galaxies that you see in these images as if they were seen by a telescope in space," Robertson said.

The technology, he added, super-charges discovery and enables the scientific community to maximize the scientific return on money invested in cutting-edge astronomical telescopes. The Vera C. Rubin Observatory in Chile, fitted with a 27.6-foot (8.4 m) mirror, cost $800 million to build. That, however, is still only a fraction of the cost of space-based telescopes such as Hubble and James Webb, both of which cost billions to build and operate.

"We spend a lot of money, huge amounts of resources, on astronomical observatories, and we would like to leverage that investment by the public and by the community to get everything that we can out of the data," said Robertson.

The Neo model is a Conditional Generative Adversarial Network, a pairing of two competing neural networks that is frequently used for AI image generation. In the case of Neo, the first network generates improved images from the captured photographs; the other evaluates their quality.
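The tug-of-war between the two networks can be sketched with the textbook conditional GAN objective. This is a generic illustration, not Neo's actual loss or architecture, which the article does not describe. The function names are invented for the example, and the scores stand for hypothetical discriminator outputs: the probability it assigns to a (ground image, candidate image) pair being a genuine space-based match.

```python
import math

def bce(prediction, target):
    """Binary cross-entropy for a single probability, clipped away from 0 and 1."""
    eps = 1e-12
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))

def discriminator_loss(score_real_pair, score_generated_pair):
    """The evaluating network wants real pairs scored 1 and generated pairs scored 0."""
    return bce(score_real_pair, 1.0) + bce(score_generated_pair, 0.0)

def generator_loss(score_generated_pair):
    """The generating network wants its output scored as real (non-saturating form)."""
    return bce(score_generated_pair, 1.0)

# Hypothetical scores early in training: the evaluator easily spots generated images.
early_d = discriminator_loss(0.9, 0.1)
early_g = generator_loss(0.1)

# Hypothetical scores later on: generated images fool the evaluator about half the time.
late_d = discriminator_loss(0.5, 0.5)
late_g = generator_loss(0.5)
```

The "conditional" part is that both networks see the ground-based image: the generator produces a sharpened version of it, and the evaluator judges whether the pair looks like a real ground-and-space match rather than judging the sharpened image in isolation.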

The model is based on an earlier technology Robertson's team developed to speed up processing of images from Webb. The $10 billion astronomical powerhouse produces such vast quantities of data that it's impossible to keep on top of it using just visual assessment by human astronomers. AI algorithms, like the one developed by Robertson and his colleagues, accomplish what would have taken humans years, in mere days.

"We're being inundated with such an amount of data that it's very difficult to keep up with," said Robertson. "Our standard approaches to analyzing these images are just really not sufficient."

The algorithm, running on NVIDIA's GPU-powered supercomputers, has made some of the most jaw-dropping discoveries in the Webb era, including spotting complex galaxies in the earliest universe, which astronomers did not expect.

"The model analyzes every pixel and distinguishes whether it's part of the sky or a part of an object," said Robertson. "And if it's an object, is it a part of a disk galaxy or a spheroid galaxy or a part of a star?"
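The per-pixel decision Robertson describes can be illustrated with a toy example. The sketch below assumes a model has already produced one score per class for every pixel; the class list follows the quote, while `classify_pixels` and the 2x2 score grid are made up for illustration and do not reflect the team's actual code or outputs.

```python
# Classes named in the quote: sky background, disk galaxy, spheroid galaxy, star.
CLASSES = ("sky", "disk", "spheroid", "star")

def classify_pixels(scores):
    """Label each pixel with the class that has the highest score.

    scores[y][x] is a sequence of per-class scores in the order of CLASSES.
    """
    return [
        [CLASSES[max(range(len(CLASSES)), key=lambda i: pixel[i])] for pixel in row]
        for row in scores
    ]

# Hypothetical model output for a 2x2 cutout of an image.
toy_scores = [
    [(0.90, 0.05, 0.03, 0.02), (0.10, 0.70, 0.15, 0.05)],
    [(0.20, 0.10, 0.60, 0.10), (0.05, 0.05, 0.10, 0.80)],
]

labels = classify_pixels(toy_scores)
# labels == [["sky", "disk"], ["spheroid", "star"]]
```

Grouping neighboring pixels that share a non-sky label would then pick out individual objects, which is the kind of bookkeeping that becomes impractical to do by eye across very large images.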

Robertson added that the algorithm is not replacing astronomers. Rather, it helps them make discoveries faster, and also detect patterns that they might overlook.

"AI is not going to be pure or complete, but of course, neither are humans and traditional methodologies. They all have different strengths and benefits," he said.

The astronomers are making the processed images available to other teams and the public to explore.

The paper describing the Neo model, which will help improve the resolution of images from the Vera C. Rubin Observatory, has been accepted for publication in the Astrophysical Journal.

Tereza is a London-based science and technology journalist, aspiring fiction writer and amateur gymnast. She worked as a reporter at the Engineering and Technology magazine, freelanced for a range of publications including Live Science, Space.com, Professional Engineering, Via Satellite and Space News and served as a maternity cover science editor at the European Space Agency.
